0. 前言#

GAN 原始 paper 中的损失很优美:

LGAN=minG maxD Ex∼pdata(x)[logD(x)]+Ez∼pz(z)[log(1−D(G(z)))]不过有的同学可能看的一头雾水, 我们来推导一下怎么来的.

1. 推导#

为方便推导 , 记 Generator 为 G , Discriminator 为 D.

1.1 Generator#

Generator 要做的事情呢 , 可以划分为以下几步:

[1] 首先, 从一个 noise 分布 sample 一笔数据 , 不妨假设 z∼pz(z)

[2] 然后 Generator 一顿操作, 输出 G(z)

[3] 目标: 尽可能的欺骗 Discriminator , 让其认为 G(Z) 是真的 , 具体表现为 D(G(Z)) 越接近 1 越好

因此, 用交叉熵表示 Generator 要优化的目标是:

L(G)=minimize ∑1∗log(D(G(z)))1+0∗log(1−D(G(z)))1=minimize ∑1∗log(D(G(z)))1=minimize −∑log(D(G(z)))=minimize ∑log(1−D(G(z)))

这里有个小trick , 当我们 update Generator 时 , Discriminator 是固定的 , 而 x∼pdata(x) 也是固定的 (就是我们真实样本训练集), 于是 Generator 有以下等价优化目标(当然可以加个 Expectation ~ ).

LG=minG Ex∼pdata(x)[logD(x)]+Ez∼pz(z)[log(1−D(G(z)))]1.2 Discriminator#

Discriminator 要做的事情呢 , 可以划分为以下几步:

[1] 首先, 从一个 真实 分布 sample 一笔数据 , 不妨假设 x∼px(x)

[2] 然后, 接受来自 Generator 的输出 G(Z)

[3] 将 x 和 G(Z) 都扔给 Discriminator

[4] 目标: 尽力分辨出 x 为真, G(Z) 为假.

因此, 用交叉熵表示 Discriminator 要优化的目标是:

L(D)=minimize ∑{1∗log(D(x))1+0∗log(1−D(x))1}x for true + ∑{0∗log(D(G(z)))1+1∗log(1−D(G(z)))1}G(z) for false=minimize ∑1∗log(D(x))1 + ∑1∗log(1−D(G(z)))1=minimize −∑log(D(x))−∑log(1−D(G(z))=maximize ∑log(D(x)) + ∑log(1−D(G(z))

啊, 美化一下, 加个 Expectation~ , 美滋滋

LD=maxD Ex∼pdata(x)[logD(x)]+Ez∼pz(z)[log(1−D(G(z)))]1.3 大一统#

Generator 要 minimize 下边这个式子, Discriminator 要 maximize 下边这个式子 . 叮~ 任务完成~

LGAN=minG maxD Ex∼pdata(x)[logD(x)]+Ez∼pz(z)[log(1−D(G(z)))]

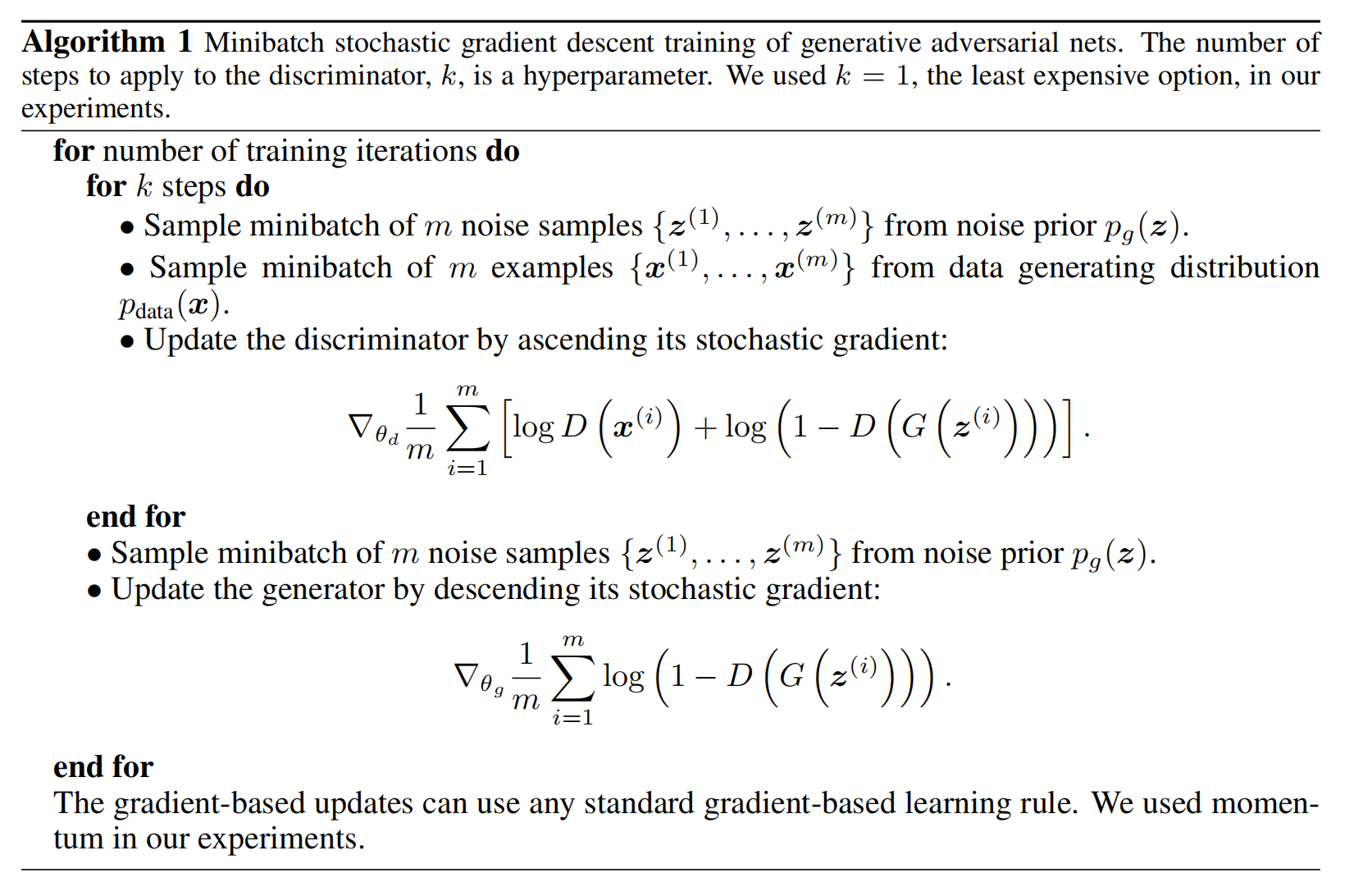

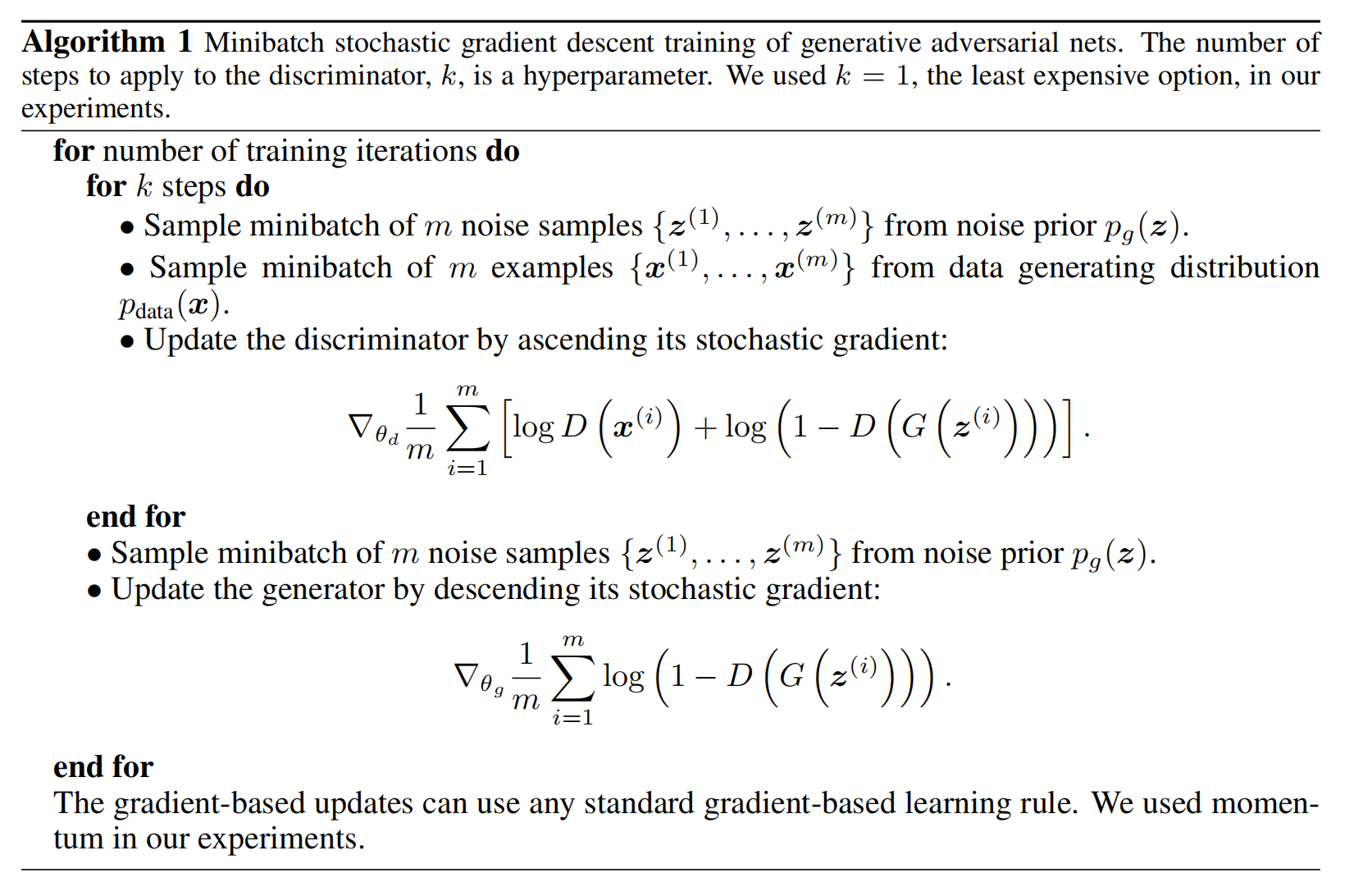

2. 算法步骤#

贴一个原始paper中的算法步骤, 不过可以看到 , 上边式子那个只是为了美观 , 实际更新的时候, 还是用原始的,